Doing The Thing: From Mechanical Turk to Mech Interp

For motivation and momentum: I started my AI Safety journey 160 days ago, and my current daily streak of "doing the thing" is 1 consecutive day!

Updates

- Extending and reproducing Anthropic's MOLTs (sparse Mixtures of Linear Transforms) as part of Georg Lange's SPAR stream on Automating Circuit Interpretability with Agents (writeup, github)

- Reproducing Transluce's Predictive Concept Decoders (PCD) to "read the mind" of an AI model by decoding the activations of a Subject Model into natural language via a Sparse Encoder and an LLM Decoder Model [github]

  - Started Karpathy-inspired video tutorials that walk through the PCD reproduction line by line to make AutoInterp research more accessible [video walkthrough]

- Playing with Fire: Proposal for Sharing AI Safety Research with At-Risk Youth through Games [video, documentation]

  - 3-step pipeline for making AIS research accessible to the broader community (e.g. at-risk teenagers using AI for mental health counseling); “AIS researchers are only one piece of the puzzle” ethos

- Reflections from my first 3 weeks of 2026 abroad at LISA and attending the Oxford AI Safety Initiative's ARBOx, including a work-in-progress Theory of Change

TLDR of Things I've Done Since Starting My AI Safety Journey 160 Days Ago

Projects

- Cross-Linguistic Alignment: Does LoRA fine-tuning a model on a task (e.g. responding in all CAPS) translate cross-linguistically? (Summary && Github)

- Reproducing Neo et al. (2024), Interpreting Context Look-ups

- Multilingual Semantics Probe: Looking for Steering Vectors for sentences that are semantically ambiguous in English but not in Mandarin

- Syntactic Dependencies in Transformers: Attention Patterns for Balanced Parentheses (Dyck) Language (Github)

Programs

- ARBOx: 2 weeks of compressed ARENA curriculum; project on cross-linguistic generalization of fine-tuning

- Attended the NeurIPS Mech Interp 2025 Workshop and found some cool takeaways!

- Started being mentored by Sudhanshu Kasewa from 80,000 Hours

What is this?

Here it goes! I'm Kyle, a 4th-year Computational Linguistics student at USC. This is the start of my AI Safety journey! I am very grateful to be mentored by Prof. Khalil Iskarous at USC.

I first encountered AI Safety at the Seattle Llama4 Hackathon on June 21st, 2025, where I learned of AI 2027. After finishing an awesome summer of engineering, I realized that the problems that excite me the most lie at the crossroads of engineering and science (computation and linguistics).

The urgent need for understanding increasingly capable AI models coupled with a burning passion for working at the interdisciplinary intersection of NLP, linguistics, and engineering at scale has sharpened my goal: to become an AI Safety researcher in Mechanistic Interpretability.

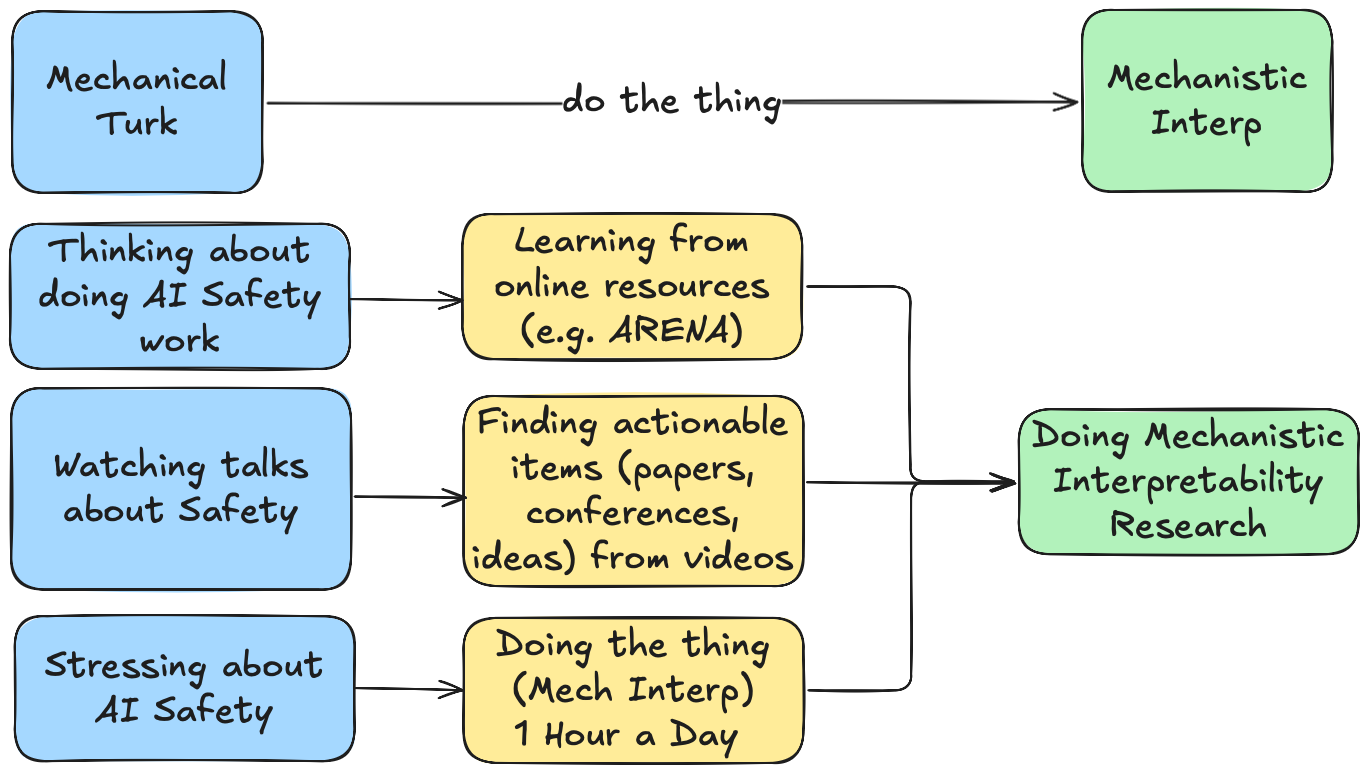

Working Backwards

Sometimes (often) I get analysis paralysis or want to wait for the perfect {time, situation, background, preparation} to start, which makes it difficult to start pursuing my goals (and dreams). This time around, I know my goal: to become an AI Safety/Mech Interp researcher! After finding David Quarel's do the thing, I decided that this site is where I will keep myself accountable for doing the thing.

- Doing --> Working through math problems, reading papers, writing down lists of possible intersections of linguistics and NLP

- The Thing --> Any of the above for at least 1 hour every day, with consistent (though not perfect) progress.

What is dlog?

I am starting this daily log, or dlog, where every day I will document my progress. I hope that the daily act of documenting will make me more resilient and help prove to myself how badly I want to be an interpretability researcher. With the help of AI, a macro pulls the daily logs into the summary you see below:

Extra Stuff

Wait What's Computational Linguistics?

As a Computational Linguistics student, I see Computational Linguistics as three parts:

- Linguistics = study of human language processing / cognition

- Mechanistic Interpretability = study of LLM language processing / cognition

- Computational Linguistics = Interdisciplinary approach to studying LLM language processing

The Computational Linguistics topics that pull me at 9.8 m / s^2 are concepts like Information Theory and Probabilistic Phonology in addition to Theoretical Machine Learning and NLP.

What do AI Safety and Mechanistic Interpretability mean to me?

- I hope that having deep knowledge in both the fields of linguistics and ML/NLP can help me build a more holistic understanding of LLM cognition and language processing.

- I see Mechanistic Interpretability as a sort of psycholinguistics (the study of real-time processing of language) for LLMs.

- Furthermore, I see Mechanistic Interpretability as a foundation for understanding AI systems. Perhaps understanding models (much like biological organisms) can support the other branches of AI Safety (alignment, control, governance, and more).

dlog: 160 Days and Counting

Total time focused so far: 505 hrs 22 mins throughout 160 days of learning
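
The macro itself isn't reproduced on this page. As a rough sketch only, assuming each entry header follows the `date | H hr M min | Goal: ...` pattern used by the entries on this page, a total like the one above could be computed along these lines. The names `ENTRY_RE`, `parse_entry`, and `total_time` are hypothetical and not taken from the actual macro.

```python
import re

# Assumed header shape, e.g. "2026-05-22 | 2 hr 0 min | Goal: ..."
ENTRY_RE = re.compile(
    r"^(\d{4}-\d{2}-\d{2}) \| (\d+) hr (\d+) min \| Goal:\s*(.*)$"
)


def parse_entry(line):
    """Return a dict for one entry header, or None if the line doesn't match."""
    m = ENTRY_RE.match(line.strip())
    if m is None:
        return None
    date, hours, minutes, goal = m.groups()
    return {"date": date, "minutes": int(hours) * 60 + int(minutes), "goal": goal}


def total_time(lines):
    """Sum focused time over all matching lines and count distinct dates."""
    entries = [e for e in map(parse_entry, lines) if e is not None]
    total = sum(e["minutes"] for e in entries)
    days = len({e["date"] for e in entries})
    return f"{total // 60} hrs {total % 60} mins throughout {days} days"


sample = [
    "2026-05-22 | 2 hr 0 min | Goal: Writeup and Proposal of Syntax of LLMs Proposal [see doc]",
    "2026-05-19 | 2 hr 50 min | Goal: MOLT Transfer of Knowledge",
    "2026-05-09 | 4 hr 0 min | Goal: Writeup documentation on follow up steps for Instruction Tuned MOLTs training",
]
print(total_time(sample))
```

Lines that don't match the header pattern (such as the free-text notes under each entry) are skipped, so the same function can be run over a whole log file.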

Below are the latest updates (auto-generated).

Latest entries

- 2026-05-26 | 0 hr 0 min | Goal:

  Write up the official proposal and research questions for investigating the syntax of LLMs (tag: Maxwell)

- 2026-05-22 | 2 hr 0 min | Goal: Writeup and Proposal for Syntax of LLMs [see doc]

  Write up the official proposal and research questions for investigating the syntax of LLMs (tag: Maxwell)

- 2026-05-21 | 2 hr 0 min | Goal: Train Toy Seq2Seq Transformer (Vaswani et al.) on translation from English to Zapanese

  Toy transformer can generalize to unseen examples of the same depth but not to unseen recursive depths (using complementizer phrases) (tag: Maxwell)

- 2026-05-20 | 2 hr 0 min | Goal: Develop Zapanese Context Free Grammar [see PR]

  Design a grammar and a script to translate from Head Initial (SVO) to Head Final (SOV) languages (tag: Maxwell)

- 2026-05-19 | 2 hr 50 min | Goal: MOLT Transfer of Knowledge

  Wrote Transfer of Knowledge for future MOLT research (tag: MOLTs)

- 2026-05-09 | 4 hr 0 min | Goal: Writeup documentation on follow up steps for Instruction Tuned MOLTs training

  Also tried to evaluate GPT-2 MOLTs, which had high MSE; will investigate (tag: MOLTs)

- 2026-05-08 | 9 hr 0 min | Goal: Realized Fatal Flaw in MOLTs Training

  Trained MOLTs for an instruction-tuned model without chat data and chat template (tag: MOLTs)

- 2026-05-07 | 9 hr 0 min | Goal: Continue Training Gemma3-4B-IT MOLTs

  Use Multi-GPU training to train Gemma3-4B-IT MOLTs (tag: MOLTs)

- 2026-05-06 | 9 hr 0 min | Goal: Train Gemma3-4B-IT MOLTs

  Use Multi-GPU training to train Gemma3-4B-IT MOLTs (tag: MOLTs)

- 2026-04-28 | 4 hr 50 min | Goal: Steering with MOLT Features and Top Activating prompts dashboard; see also PR for MOLTs to master and Wandb Runs

  Feedback from Georg on using a translation task to verify necessary and sufficient conditions (tag: MOLTs)

Todo List

The todo list started getting too beefy and has been moved to its own todo page!